Use Case Thread Generation for Acceptance Testing

From Theory to Practice

Doug Rosenberg

ICONIX

Since I’ve been working with (and writing books about) use cases since the early 1990s, it’s not surprising that I’ve been running into the problem of generating acceptance tests from use cases for a couple of decades. Back in the mid-90s, one of my co-workers at ICONIX built something called a “thread generator” as an add-in to one of the popular modeling tools of that era, while working onsite as a consultant for one of our training clients. Thanks to the development team at Sparx Systems, this test case generation capability is now available in version 8 of Enterprise Architect.

The basic concept of “thread generation” from use cases is quite simple. I’ll illustrate it with an example later in this article, but here’s a quick summary. Use cases generally have a “sunny day scenario” (the typical sequence of user actions and system responses), and several “rainy day scenarios” (everything else that can happen, like errors, exceptions, and less typical usage paths). When you’re modeling systems with use cases, you make an upfront investment in “writing the user manual” one scenario at a time, with the expectation of recovering your investment later on in the project. If you do it right[1], you virtually always recover your investment multiple times over.

One of the many places you can leverage the investment you’ve made in writing use cases is in acceptance testing. Simply put, if you consider a hypothetical use case with one “sunny day” scenario and four “rainy day” scenarios, you can expand that use case into at least five “threads”, each of which defines a potential acceptance test for some part of your system. There’s one “thread” for the sunny day scenario and others for some portion of the sunny day combined with each of the rainy day scenarios, in turn. When you’ve covered all of the permutations, you’ve got a pretty good start on “black box” acceptance-testing your use case from the user perspective.
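To make the idea concrete, here is a minimal sketch of thread expansion in Python. It is not the Enterprise Architect implementation; the class names, the `branch_after` field, and the placeholder step names are all assumptions made for illustration. Each rainy day thread is built from the portion of the sunny day path that precedes the branch, followed by the rainy day steps.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class AlternatePath:
    """A rainy day scenario that leaves the sunny day path after a given step."""
    name: str
    branch_after: int  # number of sunny day steps completed before branching
    steps: list[str]


@dataclass
class UseCase:
    name: str
    basic_path: list[str]  # the sunny day scenario
    alternates: list[AlternatePath] = field(default_factory=list)


def expand_threads(use_case: UseCase) -> dict[str, list[str]]:
    """Return one thread for the sunny day path plus one per rainy day path."""
    threads = {"Basic Path": list(use_case.basic_path)}
    for alt in use_case.alternates:
        # Part of the sunny day path, then the rainy day steps.
        threads[alt.name] = use_case.basic_path[:alt.branch_after] + alt.steps
    return threads


# Hypothetical use case: one sunny day scenario, four rainy day scenarios.
example = UseCase(
    name="Hypothetical use case",
    basic_path=["step 1", "step 2", "step 3", "step 4"],
    alternates=[
        AlternatePath("Rainy day A", 1, ["A1"]),
        AlternatePath("Rainy day B", 2, ["B1"]),
        AlternatePath("Rainy day C", 3, ["C1"]),
        AlternatePath("Rainy day D", 3, ["D1", "D2"]),
    ],
)

threads = expand_threads(example)
for name, steps in threads.items():
    print(f"{name}: {' -> '.join(steps)}")
print(len(threads))  # 5
```

This sketch assumes every rainy day path ends the use case. A path that rejoins the sunny day scenario would need its remaining sunny day steps appended as well.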

So, while I’ve long been aware of this problem and the desirability of solving it, it has always been a manual, labor-intensive process for most companies. Over the last year, however, several factors have converged, resulting in a remarkable implementation by Sparx Systems as part of the new “structured scenario” capability in Version 8 of Enterprise Architect.

As it happens, I’ve been involved as an instigator, specifier of requirements, and reviewer of the capability, although most of the hard work was done by the team at Sparx. In the remainder of this article, I’ll explain how the capability came about, how it works, and why your company’s Quality Assurance team will be very happy to have it.

Being the grain of sand in the oyster

Everyone knows that it requires a grain of sand as an “irritant” in order for an oyster to make a pearl. In some respects, I’ve played that role with respect to getting this capability automated – agitating and occasionally irritating the team at Sparx with the result being a gem of a new capability.

Briefly, the story begins with a book I’m currently writing with Matt Stephens called Design Driven Testing[2]. The DDT book is about driving testing from use cases at multiple levels. Some of those levels (for example, unit testing with JUnit and NUnit) have already been supported in the Agile/ICONIX add-in[3] that we’ve worked with Sparx to develop over several years.

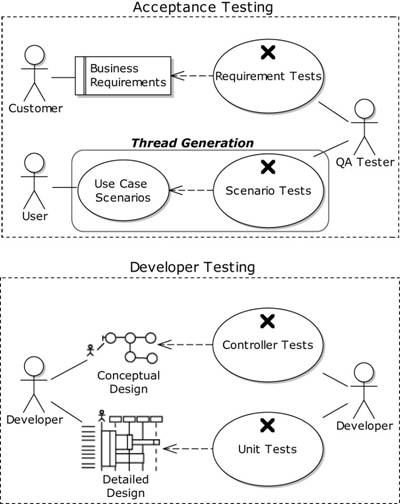

Figure 1: Design Driven Testing covers both Developer Testing and QA/Acceptance Testing

Since we wanted to write a chapter on scenario-based acceptance testing, we asked the folks at Sparx (who have a relentless drive to improve their product, the likes of which we’ve seldom seen) if they’d be interested in implementing a “thread expander”.

During this time, one of our training clients, the Large Synoptic Survey Telescope project, was in communication with us about thread expansion. Their Project Manager for Data Management, Jeff Kantor[4], happens to have worked for ICONIX in a past lifetime (in the mid-1990s) and was in fact responsible for implementing the first incarnation of the “thread expander” way back in the stone ages. To make a long story short, I asked Jeff to produce a specification for the thread generator and sent it over to Sparx, and the result is now available within the structured scenario editor in EA 8.

The Sparx structured scenario capability includes a lot more than the “thread expansion” capability discussed in this article[5], but test generation is certainly one of its key aspects.

So, what’s a use case thread expander, and why do I need one?

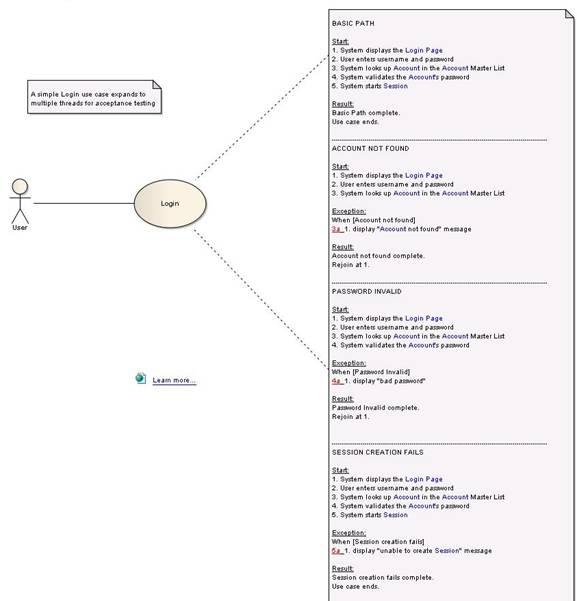

Let’s return to our hypothetical use case, with one “sunny day” and four “rainy day” scenarios. Except now let’s make it a Login use case, with the sunny day path resulting in the user logged in successfully, and rainy day paths for the user clicking cancel, invalid passwords, incorrectly entered account names, and the system being unable to start a session.

For acceptance testing purposes, we’ll want to expand this use case into five “threads”, as shown in Figure 2. Each of these threads defines a script that can be followed by the Quality Assurance team during independent QA testing of a system. Simply put, when we’ve tested all the threads for all the use cases of a system, then we know that our system meets the “behavior requirements” that have been agreed to by all parties during the analysis/design stage of a software release.

As you can see, even a simple Login use case can have quite a bit of behavior associated with it. In many organizations these scenarios will be tested by a QA organization, but there’s nothing to stop developers from scenario-testing their own code if they want to. Regardless of who does the testing, it’s important that all the threads get tested.

Figure 2: Acceptance tests need to verify all “sunny-day/rainy-day threads” of a use case
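One way to keep track of whether all the threads have been tested is sketched below in Python. The thread names paraphrase the five Login threads described above. The function names and the "pass"/"fail" result format are assumptions made for illustration and are not part of Enterprise Architect.

```python
# The five Login threads: one sunny day thread and four rainy day threads.
LOGIN_THREADS = [
    "Basic path: user logs in successfully",
    "User clicks Cancel",
    "Invalid password",
    "Account name entered incorrectly",
    "System unable to start a session",
]


def untested_threads(threads, results):
    """Return the threads that have no recorded result yet.

    `results` maps a thread name to "pass" or "fail" (a hypothetical format).
    """
    return [t for t in threads if t not in results]


def use_case_accepted(threads, results):
    """A use case is accepted only when every one of its threads has passed."""
    return all(results.get(t) == "pass" for t in threads)


# Example: QA has run three of the five threads so far.
results = {
    "Basic path: user logs in successfully": "pass",
    "User clicks Cancel": "pass",
    "Invalid password": "fail",
}

print(untested_threads(LOGIN_THREADS, results))
print(use_case_accepted(LOGIN_THREADS, results))  # False
```

Here the Login use case is not yet accepted, both because one thread failed and because two threads have not been run at all.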

Four steps from use case to scenario tests

We’re writing a detailed description of the steps from use cases to scenario tests in the Scenario Testing chapter of Design Driven Testing, and in the Structured Scenarios eBook, but for this article we’ve boiled it down to four main steps:

1) Start with a narrative use case
2) Convert the use case to a structured scenario, add detail for testing
3) Check your structured scenario carefully
4) Generate scenario tests, and show them on a test case diagram

We’ll discuss each of these in turn, and illustrate with our Login use case, in the remainder of this article.

Start with a narrative use case

If you’ve ever used software that’s cumbersome, difficult to use, or doesn’t seem to work quite right (and all of us have), you’re almost certainly using software that didn’t start out with somebody writing a good narrative “user manual style” use case.

The easiest way to think about writing narrative style use cases is simply “write the user manual before you write the code”. Writing the user manual (in use case form) forces developers to think through the user experience in detail before the code gets written. Simply put, this is important because once the code is written, it’s usually too late.

There’s plenty of detail on how to write good “ICONIX style” narrative use cases in Use Case Driven Object Modeling – Theory and Practice, but for now we’ll just assume we have one, and present it here in Figure 3. You’ll notice that we wrote our original narrative use case in the “General” tab of the use case specification dialog.

Figure 3 – A narrative “ICONIX style” use case for Login

Convert the Use Case to a Structured Scenario, add detail for testing

We’ll skip over the ICONIX Process analysis, design, and coding activities[6] for our Login use case for the purposes of this article, and get directly to the point where we’d like to define scenario tests so that our QA department can independently verify that the developers have implemented Login correctly. To do this we’ll convert our narrative use case to a structured scenario. Without going into all the details, the result should look like Figure 4 (note that we’re now using the Scenarios tab of the use case spec).

Figure 4 – Structured Scenario for Login

You’ll notice several differences between the structured scenario and the narrative version of the use case. These include:

This additional information, along with the use case’s Preconditions and Postconditions, is useful and necessary when preparing to generate scenario tests. However, if you try to specify all of it immediately up-front without writing the narrative use case first, you might get bogged down during analysis and design. So you can think of the process of converting from a narrative use case to a structured scenario as “preparing the use case for test generation”.

Check your structured scenario carefully

It’s possible to make mistakes when converting from a narrative use case to a structured scenario (and sometimes you discover errors in the narrative use case).

Two common mistakes are:

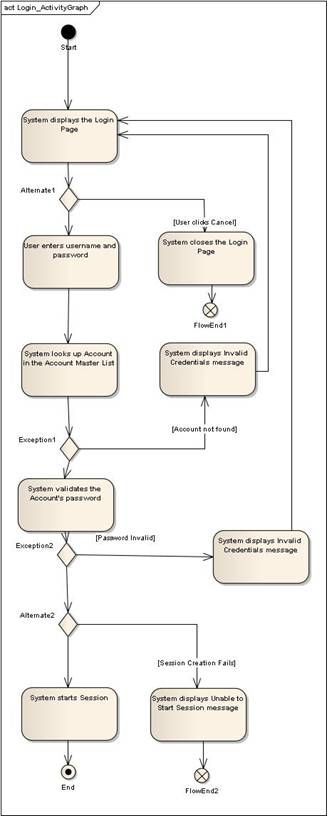

Enterprise Architect makes it easy for you to detect these errors by automatically generating an Activity Diagram that shows the path logic, with branching.

Figure 5 shows an activity diagram we’ve generated for Login. Our experience has shown that checking your structured scenario in this manner leads to better results in test case generation, as the test scenarios won’t generate correctly if the path logic is wrong.

Figure 5 – Generating an Activity Diagram verifies the logic of your structured scenario
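The kind of path-logic check that an activity diagram makes visible can also be sketched in code. The Python below is only an illustration: the `Alternate` class, its `branch_step` and `rejoin_step` fields, and the two checks are assumptions. They are not Enterprise Architect’s validation rules, and they are not necessarily the two mistakes referred to above.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass
class Alternate:
    """A rainy day path in a structured scenario (hypothetical model)."""
    name: str
    branch_step: int                   # basic path step where this path leaves
    rejoin_step: Optional[int] = None  # None means the use case ends on this path


def check_path_logic(basic_step_count: int, alternates: list[Alternate]) -> list[str]:
    """Return a description of every branch or rejoin that points at a missing step."""
    problems = []
    for alt in alternates:
        if not 1 <= alt.branch_step <= basic_step_count:
            problems.append(
                f"{alt.name}: branches from step {alt.branch_step}, which does not exist"
            )
        if alt.rejoin_step is not None and not 1 <= alt.rejoin_step <= basic_step_count:
            problems.append(
                f"{alt.name}: rejoins at step {alt.rejoin_step}, which does not exist"
            )
    return problems


# Example: a basic path with four steps and one mis-numbered rainy day path.
problems = check_path_logic(
    4,
    [
        Alternate("Invalid password", branch_step=3, rejoin_step=2),
        Alternate("User clicks Cancel", branch_step=7),
    ],
)
for problem in problems:
    print(problem)
```

An empty list means every branch and rejoin point refers to a real step of the basic path, which is a precondition for the generated threads to make sense.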

Generate Scenario

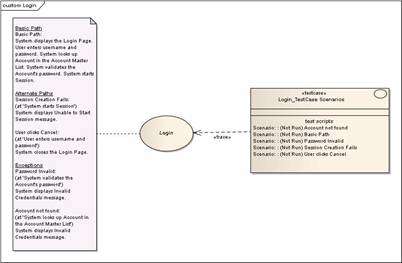

Tests and show them on a Test Case Diagram

Once you’ve checked your logic, it’s a simple matter to generate “thread tests” for your structured scenario (although it’s not always simple to find the tests you’ve generated). We used the Generate External Tests option, and created the following diagram showing a note with the reconstructed “whole use case view”, and the test case in “rectangle notation”. The resulting diagram is shown in Figure 6.

Figure 6 – A test case diagram makes it easy to find your scenario tests.

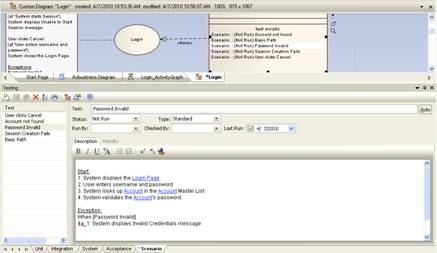

Once you’ve created the test case and placed it on the test case diagram, you can simply double-click on any of the scenarios within it to bring up EA’s testing view, as shown in Figure 7. From here you can add additional detail that’s useful for generating test plans for your QA department.

Figure 7 – Drilling into the EA Testing view from your test case diagram

Summary

This article has provided a high-level overview of acceptance test case generation from use cases, using the “thread expansion” capability that exists within the “structured scenario editor” in version 8 of Enterprise Architect. We’ve skipped over a lot of details in this article, partly because the software is still in beta test at the time of writing, and partly because there are just too many details to include in a short article. Additional details are forthcoming in both the Design Driven Testing book and the Structured Scenarios eBook that are now under development.

The author welcomes feedback and further inquiries.

[1] For some tips on doing it right, consult “Use Case Driven Object Modeling, Theory and Practice”

[2] Our publisher, Apress, has made pre-publication chapters available as an “alpha book” here.

[3] The Agile/ICONIX add-in is a free download from the Sparx website here.

[4] You can read more about Jeff and the LSST project in the eBook “20 Terabytes A Night”

[5] I’ll be describing the structured scenario capability in more detail in an upcoming eBook: Structured Scenarios, a New Paradigm for Use Case Driven Development. When completed, the eBook will be posted here.

[6] Although we certainly don’t recommend that you skip over them on your project!